Jaskirat Singh Sudan

I'm an AI researcher and Master's student at the University of Michigan-Dearborn, working to bridge cutting-edge research and practical applications. I hold a 3.7 GPA in my AI program and specialize in computer vision, representation learning, and energy-based approaches for extracting robust features across modalities.

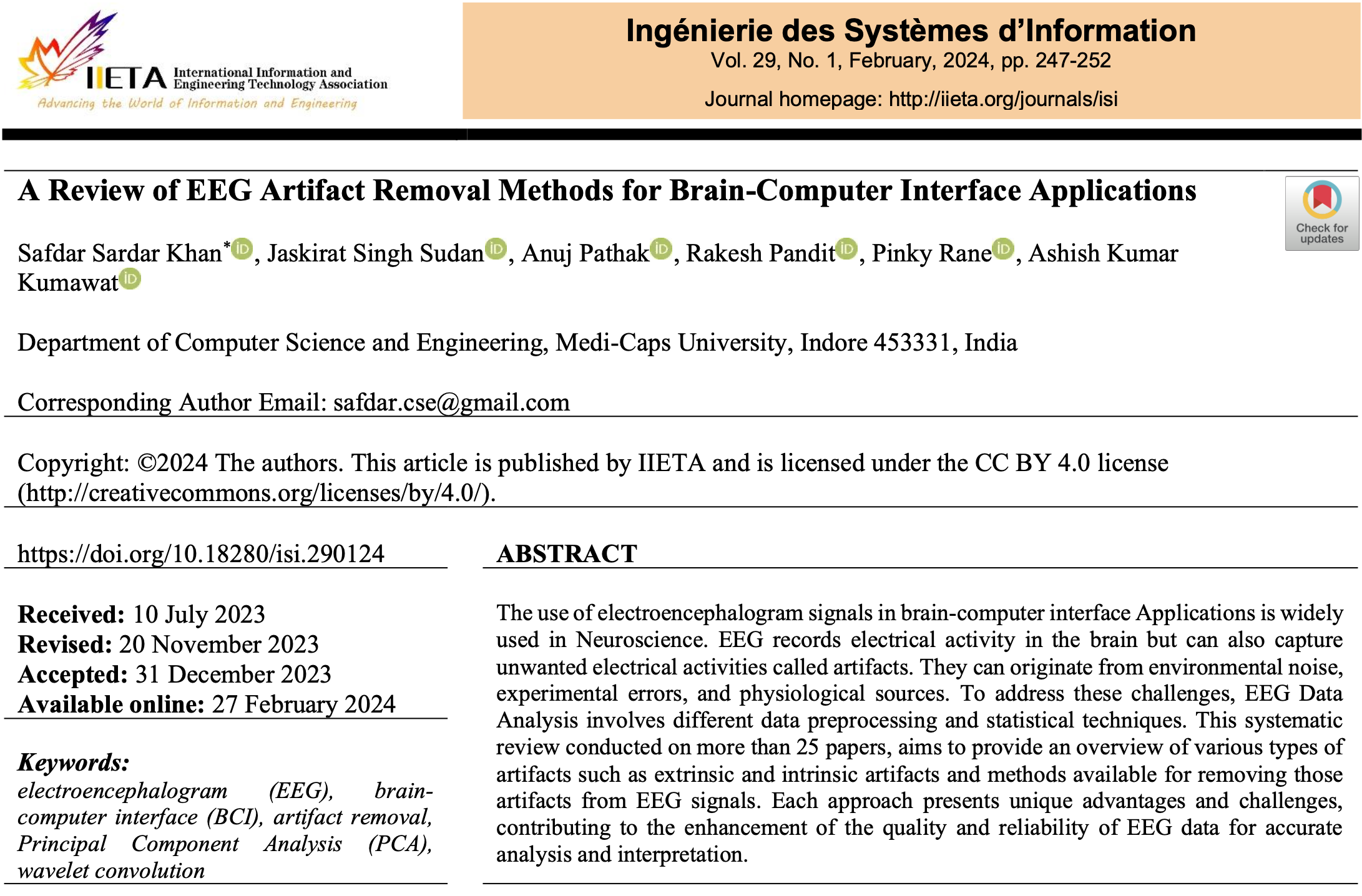

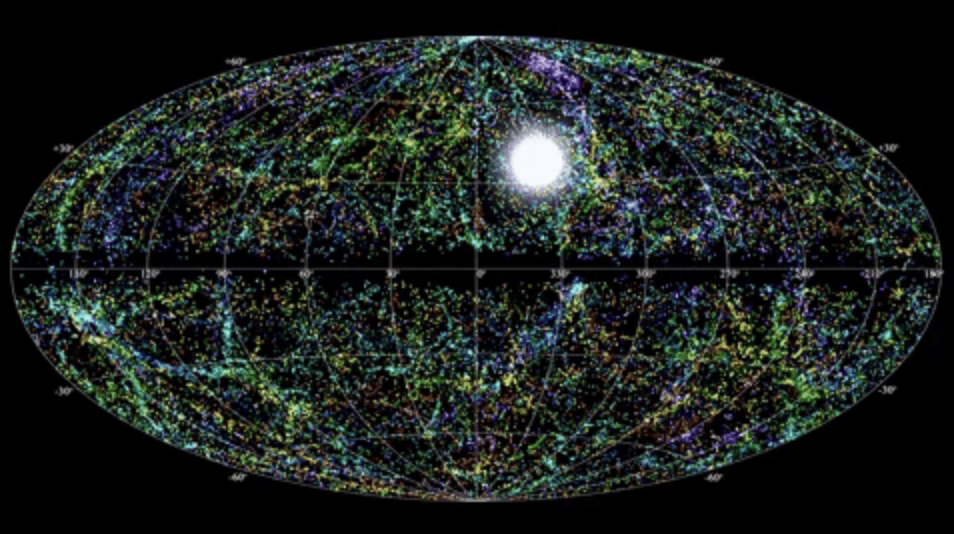

My academic foundation includes a B.Tech in Computer Science from Medi-Caps University (CGPA: 8.12), where I first developed my passion for data-driven solutions. During my undergraduate studies, I led star-based navigation and RF interference mitigation projects at IIT Indore, gaining hands-on experience in deep learning and signal processing.

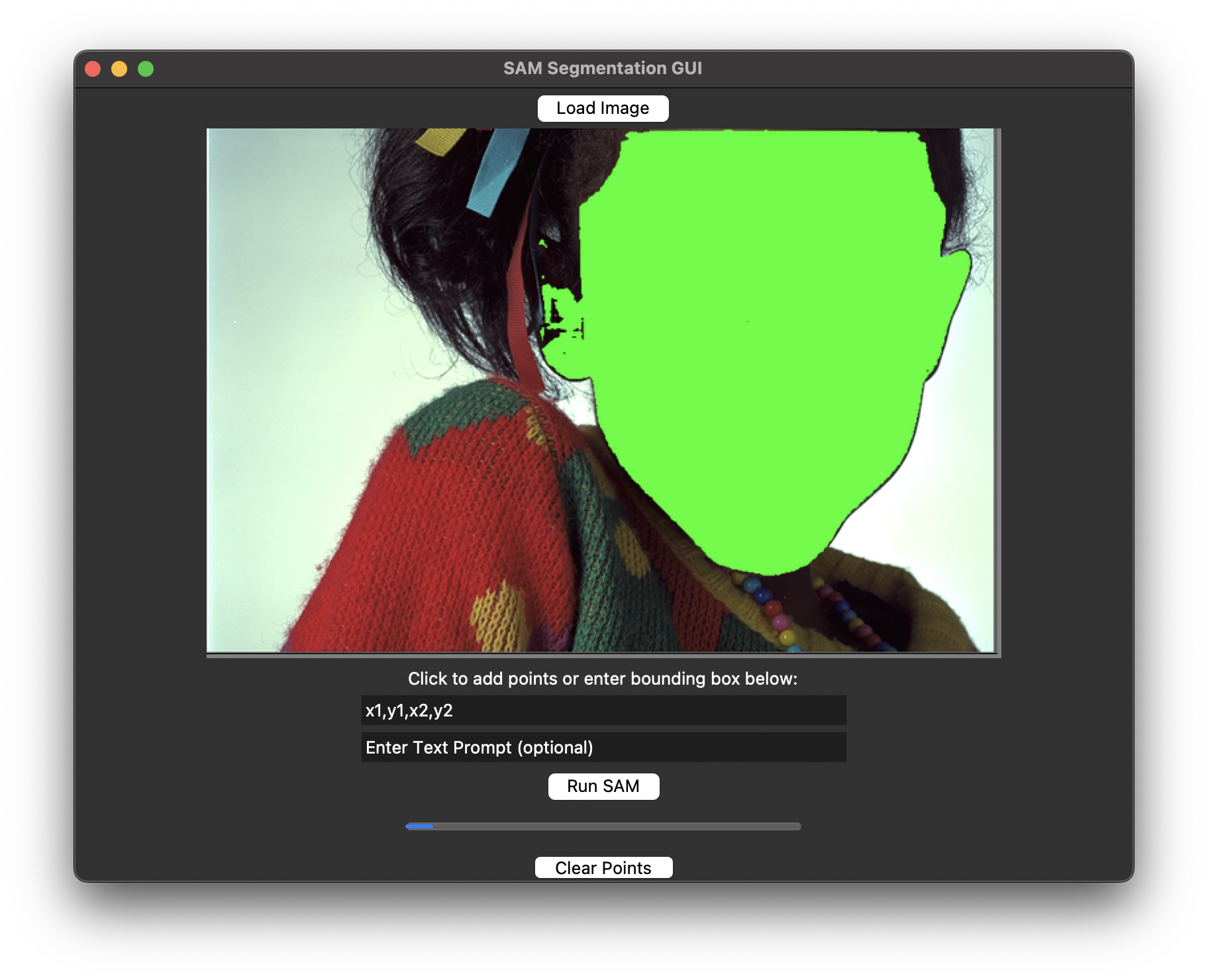

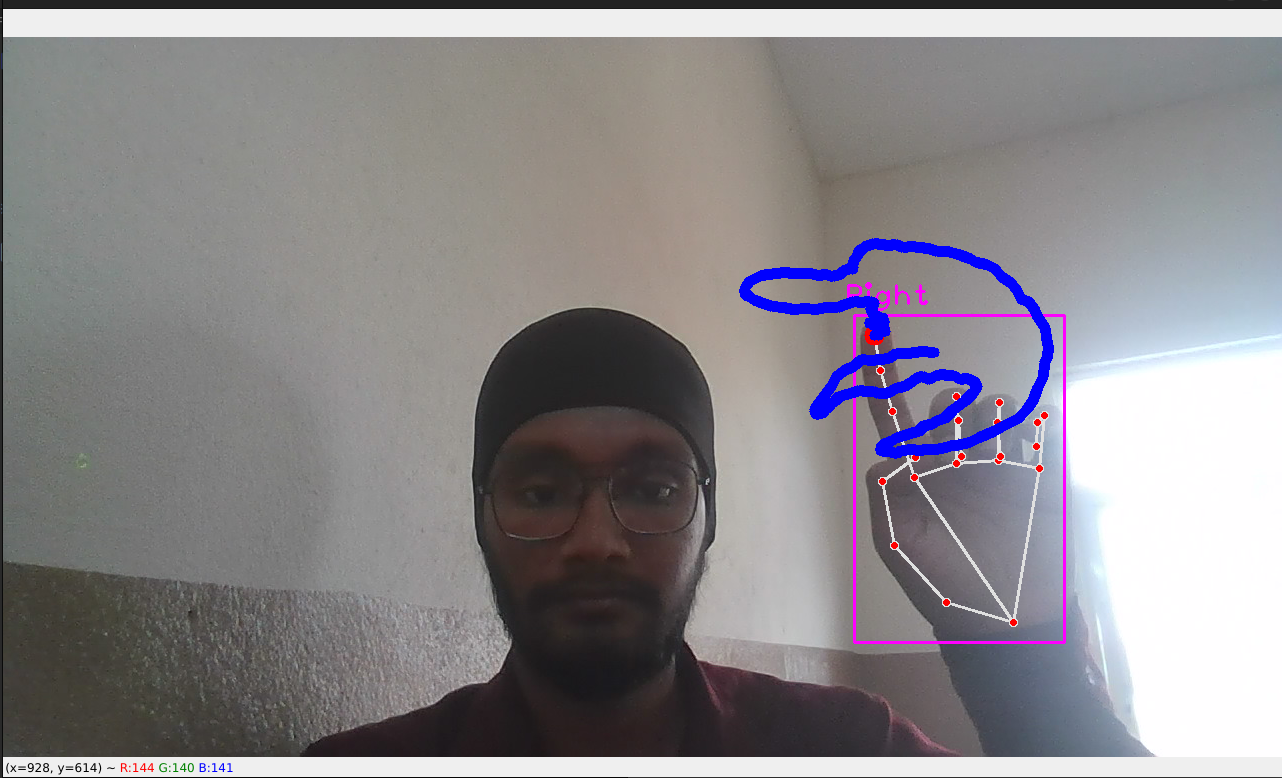

Currently, at the UMich TAI Lab, I lead a 5-member team building an authentication and gesture system that uses polarized tape patterns on illuminated nails, in close collaboration with Prof. Xiao Zhang.

Connect with me on LinkedIn.